eiffie

Guest

« Reply #15 on: November 15, 2013, 05:54:00 PM »

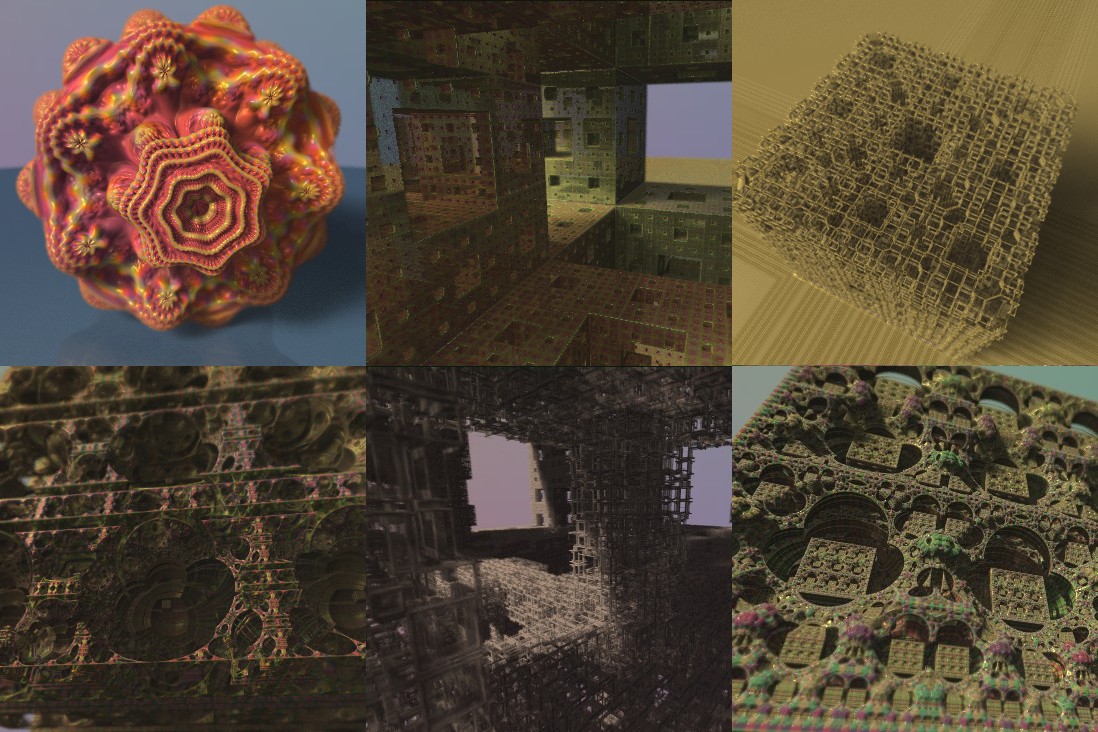

(My fuzzy shadows, by the way.) I made a version that doesn't use 3d.frag. This technique still amazes me! Sometimes you just stumble onto things. It would benefit from a few AA samples just to minimize the speckling, but for videos speckling is good. These samples are copied directly from the Fragmentarium window with no reduction in size.

The two attached files are:
- SoC.frag - the rendering engine; you include it instead of DE-Raytracer.frag.
- testSoC.frag - shows how to properly implement the DE function. You now have to accumulate colors when the boolean bColoring is set to true. You add your color to the vec4 mcol (mcol.a is for reflectivity).

(Updated the files as of 19 Nov 13.)

« Last Edit: November 19, 2013, 04:48:18 PM by eiffie »


3dickulus

« Reply #16 on: November 15, 2013, 07:33:11 PM »

Sweet! I didn't think you would take long or let someone else do that. It really has a look about it. I'm glad you put the engine together; I always end up with extra nuts and bolts. Did I make a mess of it or what? Pardon me, I was referring to the file that has "//V2 added some of knighty's improvements" in it. I haven't looked at these yet, but it will be another tool in the arsenal as soon as I get over IQ's clouds, literally and metaphorically. That's some real nice spaghetti you've got there.

Aside: I tried the formula for depth occlusion, the one from marius similar to the GL manual that you so kindly pointed out, but it is still off. Around the middle of the screen it's perfect, but swing off to the left/right/top/bottom and the depth values move away. I think it's something about polar coords, pixelscale, and the way things are calculated through a thin lens. It's so close; somewhere in there is the magic number that'll make it play nice between GL and Fragmentarium. Thanks again.


knighty

Fractal Iambus

Posts: 819

« Reply #17 on: November 15, 2013, 08:31:18 PM »

LOL! I'm stealing some credit! Seriously, all the hard work was done by eiffie. I've just made some suggestions. Thank you both, eiffie and 3dickulus, for providing the Fragmentarium scripts.

Time for other remarks and suggestions:
- The way rCoC is currently computed is a little bit wrong. It doesn't give a focal plane but rather a focal sphere. If rd is the ray direction for the current pixel and rd0 is the camera direction (that is, the ray direction at the center of the screen), one has to multiply t by 1./dot(rd,rd0) in order to get the right result.
- Another source of inaccuracies: the intersection of the traced cone with the screen is elliptical in general (it's circular only at the center).
- Adding rCoC (or a fraction of it) to the DE was suggested while considering a signed distance function. The trouble with (all?) fractal DEs is that the DE is not well defined, or not defined at all.
- This method works best with perfect or at least well-behaved DEs. Otherwise artifacts can show up.
- I think that physically "nearly accurate" lighting is really needed.
- The central problem of this method is IMHO the filtering (of the DE, the coloring, the normals, etc.). On the other hand, it can itself be used as a filtering technique for multisample renderings (even path tracing?) and would then give almost noise-free pictures.
- More to come.


eiffie

Guest

« Reply #18 on: November 15, 2013, 09:49:45 PM »

3dickulus, your script was a great motivator for me. Not that it was a mess, but it didn't make sense to me combined with the 3d.frag (which is Monte Carlo). I still have to add some kind of multisampling if people want to make stills with it. Feel free to continue building on top of it!!! Thanks again.

Knighty, you made a lot of good points but I have trouble with the first one. A camera doesn't have a focal plane (does it??). The lens focuses to a distance - it is just more noticeable in my scripts because I use a wide FOV. If I back the camera up and use a smaller FOV I believe it would be "picture perfect". Am I wrong??? Probably.

#2 seems similar to me but I may be missing something. For the "holiday tree" bulbs I had to back the camera up to make the bulbs more spherical. https://www.shadertoy.com/view/ldS3zW

#3 I would agree with you, but the results I'm seeing say it works anyway. A big improvement here was backing the sampling point up to the actual DE. I rarely see it break through the surface unless the DE is total crap (which some of mine are). The amazing box is a point cloud and it works well with no fudging.

#4 Agreed.

#5 Waiting for Syntopia to get on board with this - lol

#6 Yes - my way was just what seemed fastest since it can happen every march step. I have been thinking about it too - at least using the middle sample, but also not aligning the color sampling through x, y and z but perhaps UU, VV (the right and up directions of the ray march). But for now I like the speed of it.

One improvement I can see is needed from my pics above is to reduce the specularity in the high DoF regions. They look wet, and since bokeh highlights didn't work, maybe just get rid of them.

Let me clarify why I use 0.25*rCoC for the DE add-on and 1.0*rCoC for the distance check where you use 0.5 and 2.0. Even though I called it rCoC, I am thinking of it as the diameter (aperture is a diameter), so my numbers are just half yours. You get the same thing either way by adjusting the aperture (which I just now learned how to spell). My choice of rCoC was poor but now it is GOSPEL! ...and I just realized that wasn't clear at all.
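The radius-versus-diameter bookkeeping above can be checked with a few lines of Python. This is only a sketch: `de_offset` and `hit_threshold` are hypothetical names for "the DE add-on" and "the distance check", and neither function is taken from SoC.frag.

```python
# Hypothetical names, not from SoC.frag:
#   de_offset     = the amount added to the DE
#   hit_threshold = the distance below which a sample is taken

def terms_from_diameter(coc_diameter):
    """eiffie's convention: the value called rCoC is really a diameter."""
    de_offset = 0.25 * coc_diameter
    hit_threshold = 1.0 * coc_diameter
    return de_offset, hit_threshold

def terms_from_radius(coc_radius):
    """The other convention: the value is a true radius."""
    de_offset = 0.5 * coc_radius
    hit_threshold = 2.0 * coc_radius
    return de_offset, hit_threshold

radius = 0.01
diameter = 2.0 * radius
print(terms_from_diameter(diameter))
print(terms_from_radius(radius))
```

Both calls print the same pair, which is the point of the clarification: the two sets of constants describe the same cone once the aperture is scaled by two.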


« Last Edit: November 15, 2013, 10:03:23 PM by eiffie »


DarkBeam

Global Moderator

Fractal Senior

Posts: 2512

Fragments of the fractal -like the tip of it

« Reply #19 on: November 16, 2013, 05:53:52 PM »

No sweat, guardian of wisdom!


Kali

« Reply #20 on: November 16, 2013, 10:42:55 PM »

Oh, I'm following this stuff by eiffie on Shadertoy but I missed this thread! Amazing work, eiffie! And thanks for the Fragmentarium script, gonna try it now! Btw, that attempt knighty linked (you had to enable the DOF #define to actually see it) was just a trick that works reasonably well with volumetric rendering, and it can be seen better here: https://www.shadertoy.com/view/XssGDn (mouse Y axis to change focus). But it's nothing like this!


Syntopia

« Reply #21 on: November 17, 2013, 11:48:55 AM »

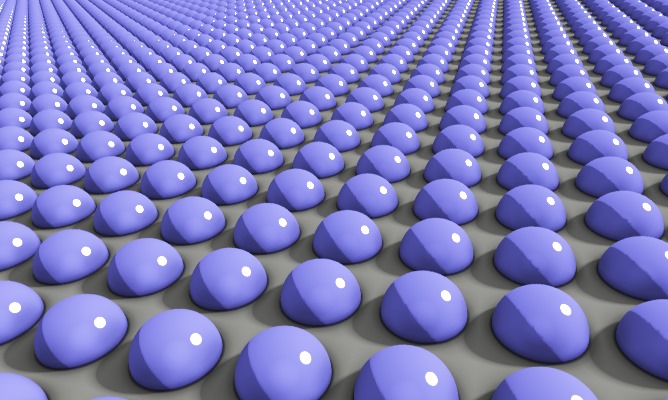

The DE-DOF looks exciting - I'll give it a try. I think the good question is whether you can do better with a screen-space method. For instance, here I have written the depth to one of the color channels, and then when I draw the buffer in a second pass, I look at a grid of neighboring points (14x14 points), calculate their size based on the distance from the focal plane, and blend them into the current pixel (unless they are behind the current pixel, in which case they are discarded). This requires two shader passes, but is fast. I think you should be able to get similar effects to the very nice M3D DOF, where I believe all depths are stored, and then the pixels are drawn in sorted order and with a size based on the distance to the focal plane.

[Comparison images: DOF / NO DOF]

Quote from: eiffie

Knighty you made a lot of good points but I have trouble with the first one. A camera doesn't have a focal plane (does it??). The lens focuses to a distance - it is just more noticeable in my scripts because I use a wide FOV. If I back the camera up and use a smaller FOV I believe it would be "picture perfect". Am I wrong??? probably.

Cameras have focal planes, not focal spheres. Try this "thin lens" model app: http://www.panohelp.com/thinlensformula.html
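The two-pass screen-space idea described earlier in this post (store depth per pixel, then gather neighbors whose blur disc reaches the current pixel, skipping neighbors that lie behind it) might look roughly like this on the CPU. It is a sketch under stated assumptions, not Syntopia's shader: the function name, the `aperture` scale, the linear blur-radius formula, and the equal weighting of contributing neighbors are all simplifications chosen for illustration.

```python
def gather_dof(color, depth, focal_depth, aperture, half_window=2):
    """Gather-style depth of field on a grayscale image.

    color, depth: 2D lists of floats with identical shape.
    aperture: assumed scale factor turning defocus into a radius in pixels.
    half_window: neighbors are searched in a (2*half_window+1)^2 grid.
    """
    h, w = len(color), len(color[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, weight = 0.0, 0.0
            for dy in range(-half_window, half_window + 1):
                for dx in range(-half_window, half_window + 1):
                    ny, nx = y + dy, x + dx
                    if not (0 <= ny < h and 0 <= nx < w):
                        continue
                    # Neighbors behind the current pixel are discarded.
                    if depth[ny][nx] > depth[y][x]:
                        continue
                    # Assumed model: blur radius grows linearly with the
                    # neighbor's distance from the focal plane.
                    radius = aperture * abs(depth[ny][nx] - focal_depth)
                    if dx * dx + dy * dy <= radius * radius:
                        total += color[ny][nx]
                        weight += 1.0
            # The pixel itself always passes both tests, so weight >= 1.
            out[y][x] = total / weight
    return out

image = [[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]
flat_depth = [[5.0] * 3 for _ in range(3)]
print(gather_dof(image, flat_depth, focal_depth=5.0, aperture=1.0))
```

With every pixel exactly on the focal plane the blur radius is zero and the image comes back unchanged; moving `focal_depth` away from 5.0 blends neighbors in. A real implementation would normally weight each neighbor by the inverse of its disc area so that energy is conserved.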


eiffie

Guest

« Reply #22 on: November 17, 2013, 05:45:24 PM »

I've updated the SoC.frag and testSoC.frag files a bit. There are times when no normal can be calculated, so I just cheat and use -raydirection.

Kali - I saw that DOF, and you were ahead of me in realizing you have to march through objects to get it.

Syntopia - Glad you're looking at it. You are right that post-processed DoF is fast and looks good, but this has added benefits: AA and soft shadows/reflections/refractions. (I haven't implemented proper reflections and refractions but it should be possible.) Post-process DoF has some drawbacks. Reflections/refractions are never correctly handled, and real DoF sees around the corners of objects. This is my problem with IQ's soft shadows as well: the penumbra doesn't cut in behind the object. They still look good though, so I get your point: why the extra work when this method isn't realistic either? (I'm sampling a cone with spheres!) I am mainly excited about the AA and soft reflections, but hey, I'll take the DoF too! Thanks for the lens link, but they confuse it by talking about focal distance too! -lol spheres are planes (locally)

Here are some WebGL examples with added lights (regular and distance estimated):
https://www.shadertoy.com/view/4s23zW
https://www.shadertoy.com/view/lsjGzW


« Last Edit: November 17, 2013, 06:47:39 PM by eiffie »


knighty

Fractal Iambus

Posts: 819

« Reply #23 on: November 17, 2013, 07:40:50 PM »

Hey Syntopia, awesome DoF! I'm also glad you are joining, and thank you for the link about the thin lens model. That is indeed what I was referring to.

As eiffie said, his method supports soft shadows and reflection (and probably refraction), which makes it extremely powerful. Anti-aliasing also comes in naturally. It's an approximation, and there are many kinds of artifacts that show up for moderate to high aperture, but what amazes me is its robustness, and that the noise due to the jittering disappears rapidly when lowering the fudge factor. I believe that using it with a path tracer would help reduce (dramatically?) the noise. (OK! One would use a "Russian roulette" method based on the opacity of the samples to choose the path, but even then there should be noise reduction.)

Here are my tweaks to eiffie's shader. The approach is slightly different: at each step we sample the surface that intersects the current SoC. The opacity depends on how much of the cone the sample's surface covers and on its orientation. The marching step is also different.
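To make the "opacity depends on coverage and orientation" idea concrete, here is a rough Python sketch of front-to-back accumulation along one ray. It is not knighty's shader: the post does not give his coverage formula, so `coverage` is simply an input here, and the function name and the 0.99 early-exit threshold are assumptions. Only the dot(N,-rd) orientation term comes from the thread.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def composite_front_to_back(samples, ray_dir):
    """Accumulate samples taken along one ray, nearest first.

    samples: list of (color, normal, coverage) where color is an (r, g, b)
    tuple, normal is a unit vector and coverage is in [0, 1] (how much of
    the cone cross-section the surface fills; its formula is not specified
    in the thread, so it is passed in).
    ray_dir: unit ray direction rd.
    Returns (accumulated_color, accumulated_alpha).
    """
    acc_color = [0.0, 0.0, 0.0]
    acc_alpha = 0.0
    minus_rd = [-c for c in ray_dir]
    for color, normal, coverage in samples:
        # Orientation term dot(N, -rd): grazing surfaces contribute less.
        facing = max(dot(normal, minus_rd), 0.0)
        alpha = coverage * facing
        remaining = 1.0 - acc_alpha  # light not yet blocked by nearer samples
        for i in range(3):
            acc_color[i] += remaining * alpha * color[i]
        acc_alpha += remaining * alpha
        if acc_alpha > 0.99:  # assumed cutoff: the ray is effectively opaque
            break
    return tuple(acc_color), acc_alpha

rd = (0.0, 0.0, 1.0)
samples = [
    ((1.0, 0.0, 0.0), (0.0, 0.0, -1.0), 0.5),  # half-covering red, head-on
    ((0.0, 1.0, 0.0), (1.0, 0.0, 0.0), 1.0),   # grazing green, contributes 0
    ((0.0, 0.0, 1.0), (0.0, 0.0, -1.0), 1.0),  # fully covering blue, head-on
]
print(composite_front_to_back(samples, rd))
```

The grazing green sample drops out entirely because its normal is perpendicular to the ray, which is the behavior the orientation term is meant to give.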


eiffie

Guest

« Reply #24 on: November 17, 2013, 09:02:03 PM »

More great stuff Knighty! I like your using dot(N,-rd) for the mix so rays that graze the surface (taking more march steps) don't contribute more than the head-on colliders. I will mark mine up for your contributions, soon it will be more than mine!


Syntopia

« Reply #25 on: November 17, 2013, 10:31:52 PM »

Quote from: eiffie

More great stuff Knighty! I like your using dot(N,-rd) for the mix so rays that graze the surface (taking more march steps) don't contribute more than the head-on colliders. I will mark mine up for your contributions, soon it will be more than mine!

I have played with the DE methods, and yes, the DOF is very nice - even for relatively large apertures. Eiffie, if you want a focal plane solution, like in Knighty's shader, you can pass the (uppercase) 'Dir' variable (which is the same for all pixels) from the vertex shader in a varying variable, and calculate the rCoC as:

float rCoC=CircleOfConfusion(t*dot(rd,Dir));//calc the radius of Code
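Why `t*dot(rd,Dir)` yields a focal plane rather than a focal sphere can be seen with a little vector arithmetic. The sketch below is plain Python, not shader code; `depth_along_camera`, `cam_dir` and `focal_distance` are illustrative names, and the camera is assumed to look down +z.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(c / length for c in v)

def depth_along_camera(t, rd, cam_dir):
    """Depth of the point ro + t*rd measured along the camera direction."""
    return t * dot(rd, cam_dir)

cam_dir = (0.0, 0.0, 1.0)   # plays the role of 'Dir'
focal_distance = 4.0        # assumed focal plane at z = 4

for x in (0.0, 0.5, 1.0):
    rd = normalize((x, 0.0, 1.0))           # increasingly off-axis rays
    t = focal_distance / dot(rd, cam_dir)   # ray length needed to reach the plane
    depth = depth_along_camera(t, rd, cam_dir)
    print(f"t = {t:.3f}  depth = {depth:.3f}")
```

The ray length `t` grows toward the edge of the view while the projected depth stays at the focal distance, so a circle of confusion computed from the projected depth is zero across the whole plane. Feeding raw `t` into the same function would put only a sphere of radius `focal_distance` in focus.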


eiffie

Guest

« Reply #26 on: November 18, 2013, 04:25:20 PM »

Thanks Syntopia that is an easy fix! Will do.


eiffie

Guest

« Reply #28 on: November 25, 2013, 09:21:51 PM »

Thanks for the link, Knighty. I thought this script by TekF was interesting to see the different way he handled the alpha. It was missing the jittered step so it bands, but it still looks OK. https://www.shadertoy.com/view/4dBGzw

Your way of using the surface normal to reduce the alpha works great, but I also like how not using it fills in the background in so few marching steps.


knighty

Fractal Iambus

Posts: 819

« Reply #29 on: November 26, 2013, 03:57:34 PM »

Quote from: eiffie

I thought this script by TekF was interesting to see the different way he handled the alpha. It was missing the jittered step so it bands but still looks ok. https://www.shadertoy.com/view/4dBGzw

Looks nice. I can't say I understand it, but it looks nicer if one removes the "modulate by the length of the step" instruction.

Quote from: eiffie

Your way of using the surface normal to reduce the alpha works great but I also like how not using it fills in the background in so few marching steps.

The surface normal is necessary to make it look OK. That's because the samples are discs. If you set fudgeFactor2=.25, the jittering (which costs cycles) can be removed, depending on the aperture. The number of samples per ray is indeed a problem, especially with shadows... The fewer samples taken, the faster. The other problem with "my" approach is that it doesn't correctly handle thin/small objects/features because of the assumption of flat samples.

Your DoF algorithm is actually an integration algorithm. Maybe using higher-order integration methods would give smoother results.

Now, THE question that I'm wondering about: how to filter geometry+material, if such a thing is possible? My wild guess is that it would be a kind of BSDF. If this is the case, how could it be simplified in order to make it practical? This question is already addressed and partially answered in Cyril Crassin's thesis (GigaVoxels). Is it possible to go further?
