hobold

Fractal Bachius

Posts: 573

« Reply #30 on: August 13, 2015, 11:56:02 AM »

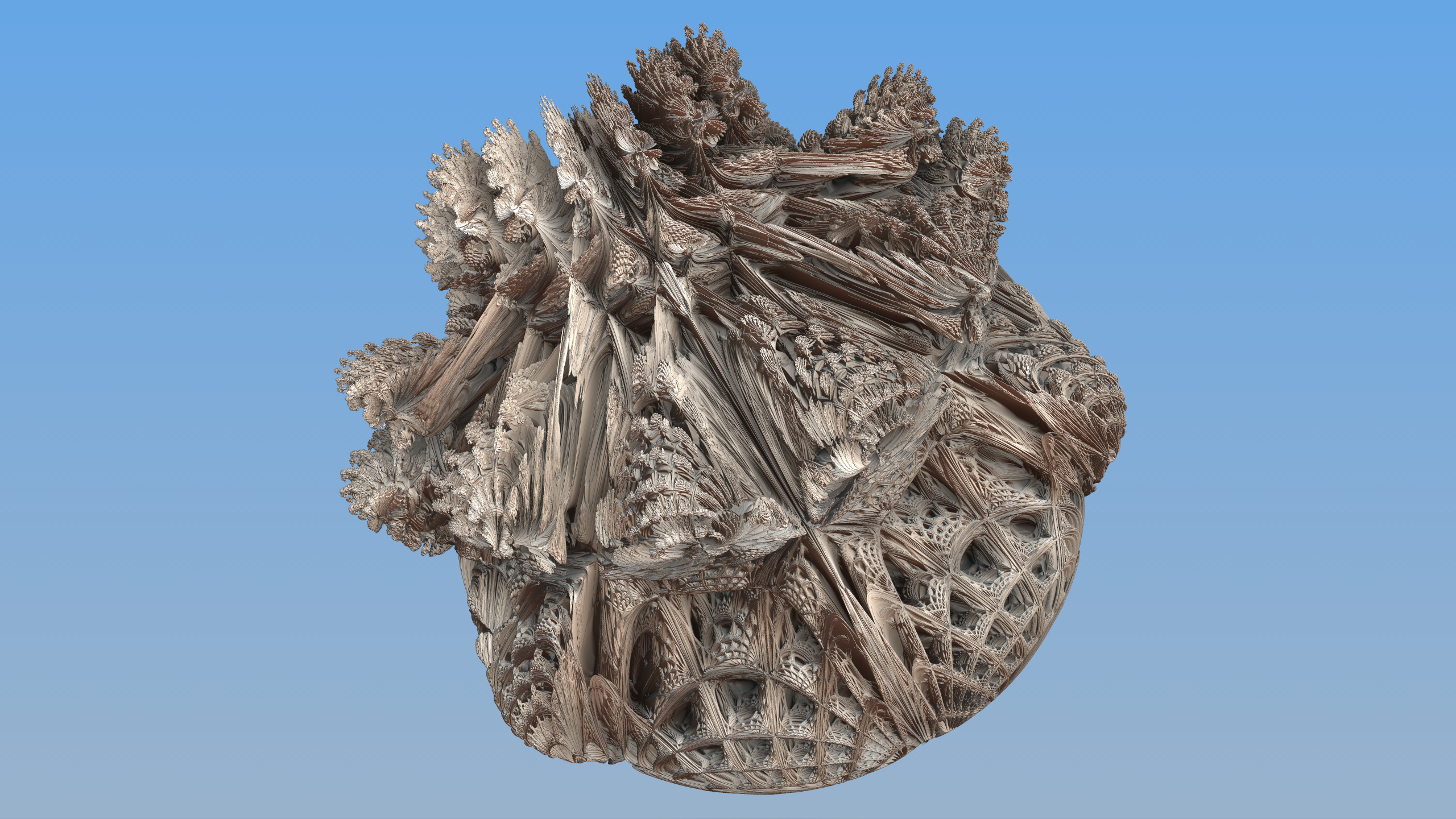

(Beware: replying to my ancient thread.) I rewrote my brute force renderer and updated the lighting a bit. As a side effect, I made a new beauty shot of the Riemandelettuce (image above).

I hadn't really planned to work on lighting this time around, but on further statistical improvements to the brute force rendering method. I have worked out the formulas for a sample biasing on steroids, but have yet to test it. The general idea is to compute the image from coarse to fine resolution. Then you can concentrate the samples for not-yet-computed pixels around the distance of nearby hits. I'll be back with more information if this turns out to actually work.

lycium

« Reply #31 on: August 13, 2015, 12:01:40 PM »

Pretty impressive detail for a brute force render (I had to open the image in a new tab to see it 1:1 though); any details for us? E.g. CPU/GPU used, step sizes, ...

I'm working on a DE renderer and am quite unhappy with a lot of the rendering artifacts, especially when reflecting off smooth surfaces. I'm thinking DE to approach the surface alone won't be enough, one actually needs to go through the surface and then do root refinement (else reflections have steps).

Regarding your coarse->fine method, that sounds like a good way to do the primary rays, but for global illumination those are a very small % of the total rays cast, so it wouldn't help if you want high quality illumination.

3dickulus

« Reply #32 on: August 13, 2015, 03:14:52 PM »

lycium

« Reply #33 on: August 13, 2015, 03:39:27 PM »

Nah, I don't like hacks and tricks. My style is more "do it right, make it efficient, then throw lots of compute power at it". I just plugged in a GTX 970 today, it's insane... you can do a lot with 4.4 teraflops of computing power! Example renders coming Soon (TM).

hobold

« Reply #34 on: August 13, 2015, 04:11:14 PM »

I largely followed Syntopia's non-DE renderer described in this thread: http://www.fractalforums.com/3d-fractal-generation/rendering-3d-fractals-without-distance-estimators/ with minor statistical improvements: golden sampling, bisection refinement, and sampling restart after hit. All in boring sequential C++ code (with multithreading across image rows, of course).

Lighting is all done in screen space on the depth buffer, also largely following Syntopia's lead. In my case the depth buffer contains Euclidean distances in world space (from eye to target point), not a z coordinate of some camera transform. Like my earlier images, most of the lighting is contributed by a simple dot product of the surface normal with the direction towards an infinitely distant light source (not Phong's algorithm, but effectively the same shading).

Two small improvements made a huge visible difference. One is a highlight based on the reflected view ray. If the reflected direction comes close to the infinitely distant light direction, the overall color is faded towards pure white (there are the usual "roughness" and "intensity" parameters to control the size and strength of the highlights). Due to the fractal nature of the surface, this does not give the usual "glossy plastic" look, but has a tendency to brighten areas of pronounced tiny detail.

The other small improvement that made a big difference to overall perceived "solidity" is an embarrassingly simple fake of global illumination. Based on the "up" component of the surface normal, I vary the color of the added filler light. Object parts facing upwards get blue-ish light (think sky), downward facing parts get reddish-brown (ground or floor), and surfaces facing the horizon get neutral grey (the average of "top color" and "bottom color"). Interpolation is linear between the three.

Finally, the biggest improvement to the visible depth of the overall image is a BLATANTLY WRONG and non-physical trick. Like Syntopia, I compute a fake occlusion factor that takes on values from 0.0 to 1.0 and selectively dims more distant parts of the surface if many neighbouring pixels are nearer. HOWEVER, I do not just multiply ambient light with occlusion. I also dim direct light with a down-weighted occlusion factor (i.e. an occlusion factor that is dragged a bit towards 1.0). This is BLATANTLY WRONG, but had the beautiful effect of making the direct lighting softer (i.e. now looking like an area light rather than a point light) and bringing out a ton of detail everywhere.

So I must disappoint lycium again. No breakthrough here, just pretty fakes. They do hold up reasonably well in animation, too. But computation times are completely unreasonable at the quality level of the beauty shot above, so I have no re-rendered animations to show yet. As I wrote earlier, a potentially very effective speedup should be possible, but it'll take me another while to set up that experiment.
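
For readers who want to experiment, the lighting recipe above could be sketched roughly as follows. This is not the renderer's actual code: every name, the default colors, the exponent-based highlight falloff, and the choice of the y axis as "up" are assumptions made for illustration.

```cpp
#include <algorithm>
#include <cmath>

struct Vec3 { double x, y, z; };

static Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
static Vec3 operator*(Vec3 a, double s) { return {a.x * s, a.y * s, a.z * s}; }
static double dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
static Vec3 mix(Vec3 a, Vec3 b, double t) { return a * (1.0 - t) + b * t; }

// All parameter names and default values are illustrative assumptions.
struct LightingParams {
    Vec3 lightDir{0.0, 1.0, 0.0};     // unit vector towards the distant light
    Vec3 skyColor{0.4, 0.5, 0.8};     // filler light for upward facing normals
    Vec3 groundColor{0.5, 0.3, 0.2};  // filler light for downward facing normals
    double roughness = 0.1;           // larger values give wider highlights
    double intensity = 0.5;           // maximum fade towards white
    double occlusionDrag = 0.5;       // pull of occlusion towards 1.0 for direct light
};

// Linear interpolation between ground, horizon (their average) and sky color,
// driven by the "up" component of the unit normal (assumed to be y here).
Vec3 fillerLight(double normalUp, const LightingParams& p) {
    const Vec3 horizon = (p.skyColor + p.groundColor) * 0.5;
    return normalUp >= 0.0 ? mix(horizon, p.skyColor, normalUp)
                           : mix(horizon, p.groundColor, -normalUp);
}

// normal and viewDir are unit vectors; viewDir points from the eye to the surface.
// occlusion is 1.0 for unoccluded pixels and falls towards 0.0 where many
// neighbouring pixels are nearer.
Vec3 shade(Vec3 baseColor, Vec3 normal, Vec3 viewDir, double occlusion,
           const LightingParams& p) {
    const double diffuse = std::max(0.0, dot(normal, p.lightDir));
    // The non-physical part: direct light is dimmed by a softened occlusion too.
    const double softOcclusion = occlusion + p.occlusionDrag * (1.0 - occlusion);
    const Vec3 color = baseColor * (diffuse * softOcclusion)
                     + fillerLight(normal.y, p) * occlusion;

    // Highlight: fade towards white where the reflected view ray nears the light.
    const Vec3 reflected = viewDir + normal * (-2.0 * dot(viewDir, normal));
    const double alignment = std::max(0.0, dot(reflected, p.lightDir));
    const double fade = p.intensity * std::pow(alignment, 1.0 / p.roughness);
    return mix(color, Vec3{1.0, 1.0, 1.0}, std::min(1.0, fade));
}
```

Setting `occlusionDrag` to 1.0 in this sketch gives the conventional behaviour where only the filler light is occluded, which makes it easy to compare both looks on the same depth buffer.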

lycium

« Reply #35 on: August 13, 2015, 04:26:02 PM »

The problem with these fake lighting methods is that the shadows will be inconsistent and often swim around as your camera pans/zooms (especially without extra pixels on the edges, which no one does for some reason). And I can't say I'm disappointed, just curious. I'm from the offline rendering school, just a different approach.

One thing though: for 3D fractals it's pure computation / FLOPs and almost no memory access if you do it straightforwardly. This maps well to GPU computing, and then suddenly CPU rendering becomes completely meaningless in the face of ~300 euro 4 teraflop GPUs. Once you've tasted that power, there is absolutely no going back...

hobold

« Reply #36 on: August 13, 2015, 07:31:44 PM »

> The problem with these fake lighting methods is that the shadows will be inconsistent and often swim around as your camera pans/zooms

Fakes are fake, no way around it. But when I wrote that the fakes hold up well, I meant this: http://vectorizer.org/rmdltc/tumbleLow001.mp4

Please excuse the low resolution and all the noise, and just pay attention to the very limited appearance of creeping shadow clouds and the like. It's nowhere near good enough for Hollywood, but it'll suffice to go hunting for more formulas without proper distance estimates.

KRAFTWERK

« Reply #37 on: August 13, 2015, 09:01:45 PM »

Lovely render of the Riemandelettuce Hobold!

3dickulus

« Reply #38 on: August 14, 2015, 12:32:09 AM »

@hobold that animation looks promising, a little rough around the edges but the lighting looks good.

@lycium hack or trick, I think it's a deeper reinterpreting of the data for better image quality (rhf) and maximizing the opportunities at the end of the ray (soc). I have been debating whether I should get another GTX 760 (SLI for 2) or invest in one bigger card.

hobold

« Reply #39 on: August 17, 2015, 02:31:03 PM »

Just a short update on the "coarse to fine" idea.

The important thing is that it works, albeit not in the way I would have liked. It is very effective against the "rough edges", and generally reduces noise in regions of "dusty" detail. Overall image quality improvement is equivalent to increasing the number of samples maybe four or five times, while processing time increases only 10% or so.

Unfortunately this speedup cannot be used to further reduce the number of samples; brute force rendering simply requires a minimal number of samples to work at all. But higher levels of brute force do see a speedup.

I should do a proper writeup eventually. But since this works so nicely, I'll present the basics here so that other programmers can start tinkering with the idea.

1. The algorithm is recursive. In every iteration, horizontal and vertical resolutions are doubled. (I implemented it the other way round: with a full resolution image buffer and a power-of-two stride to neighbour pixels. The stride is halved after each iteration, and the image buffer is filled from sparse to dense until finally all pixels have been set.)

2. At the beginning of one doubling, the pixel buffer conceptually looks as if it is made up of many small 2x2 tiles, of which the top left pixel is already set, and the other three will be computed.

3. The first of two steps is to compute the bottom right pixel of every 2x2 tile. For that we can use four existing diagonal neighbours, as those are all top left of their respective 2x2 tile.

4. The second step computes both the top right and bottom left of each 2x2 tile, with the help of four horizontal and vertical neighbours.

5. In both cases, I simply use the minimum distance (from the eye/camera) of the four neighbours to set up a function that biases sample density. Samples are concentrated around the minimum neighbour distance, but cover the ray from eye point all the way to the given maximum view distance. So if there is any small contiguous object in front of other structures, we have a much higher statistical chance to hit it with rays through adjacent pixels. This is particularly true for the boundary of any object, i.e. its outline in front of an empty background.

6. There is an optional "third of two" steps. We can now check the top left pixel of each 2x2 tile. If any of the newly computed eight neighbours is nearer, it might be beneficial to re-sample that pre-existing pixel with an appropriately biased sample distribution. I am doing this, but it didn't have a glaringly obvious effect.
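
The traversal order of steps 1 to 5 can be sketched as below, using the full-resolution buffer with a halving stride. This is only an illustration under assumptions: `renderCoarseToFine`, `PixelSampler` and `kNoHit` are made-up names, the starting stride is assumed to be a power of two, and the optional re-sampling pass of step 6 is left out.

```cpp
#include <algorithm>
#include <cstddef>
#include <functional>
#include <limits>
#include <vector>

// Hypothetical callback: renders pixel (x, y), concentrating samples around
// focusDistance (infinity means no hint is available), and returns the hit
// distance from the eye, or infinity for a miss.
using PixelSampler = std::function<double(int x, int y, double focusDistance)>;

const double kNoHit = std::numeric_limits<double>::infinity();

std::vector<double> renderCoarseToFine(int width, int height, int coarsestStride,
                                       const PixelSampler& sample) {
    std::vector<double> depth(static_cast<std::size_t>(width) * height, kNoHit);
    auto at = [&](int x, int y) -> double& {
        return depth[static_cast<std::size_t>(y) * width + x];
    };
    // Minimum depth over the four neighbours at offsets (dx[i]*s, dy[i]*s)
    // that lie inside the image.
    auto nearest = [&](int x, int y, int s, const int dx[4], const int dy[4]) {
        double best = kNoHit;
        for (int i = 0; i < 4; ++i) {
            const int nx = x + dx[i] * s, ny = y + dy[i] * s;
            if (nx >= 0 && nx < width && ny >= 0 && ny < height)
                best = std::min(best, at(nx, ny));
        }
        return best;
    };
    static const int diagX[4] = {-1, 1, -1, 1}, diagY[4] = {-1, -1, 1, 1};
    static const int axisX[4] = {-1, 1, 0, 0}, axisY[4] = {0, 0, -1, 1};

    // Coarsest level: no neighbours exist yet, so sample without a hint.
    for (int y = 0; y < height; y += coarsestStride)
        for (int x = 0; x < width; x += coarsestStride)
            at(x, y) = sample(x, y, kNoHit);

    for (int s = coarsestStride / 2; s >= 1; s /= 2) {
        // First step: bottom right pixel of each tile, from diagonal neighbours.
        for (int y = s; y < height; y += 2 * s)
            for (int x = s; x < width; x += 2 * s)
                at(x, y) = sample(x, y, nearest(x, y, s, diagX, diagY));
        // Second step: top right and bottom left pixels, from the horizontal
        // and vertical neighbours (which now include the diagonal pixels).
        for (int y = 0; y < height; y += s)
            for (int x = ((y / s) % 2 == 0) ? s : 0; x < width; x += 2 * s)
                at(x, y) = sample(x, y, nearest(x, y, s, axisX, axisY));
    }
    return depth;
}
```

Every pixel is sampled exactly once in this sketch, and the only extra work over a plain scanline loop is the four-neighbour minimum per pixel.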

Now for the hard part: biasing samples to cluster around a desired distance.

For the sake of efficiency, I chose the actual warping function to be a third degree polynomial. This is the lowest degree that can meet all required constraints, but has enough degrees of freedom left to choose focus distance and sample density at the focus.

Crap. I just noticed that I don't have the finished formulas with me. The derivation was too complicated for me to redo on the spot. I'll be back here within a few days ...

lycium

« Reply #40 on: August 17, 2015, 02:37:52 PM »

Pretty sure I saw this described in the pouet raymarching thread (or one of them at least)... I think I saw you posting there too, or was it someone else? (definitely saw knighty there!)

hobold

« Reply #41 on: August 17, 2015, 04:11:02 PM »

No, that was not me. But the novelty is not in the recursive refinement, only in the specific use of the neighbours' distances, which I have yet to describe here (it involves inverting a rational function with an additional free parameter; I could not have done that without Wolfram Alpha's help, and cannot even remember the result offhand).

I am no regular on pouet, but I do recall a "paper" presented there which did something similar using distance estimation. They used the computed free distances not just to march along a single ray, but utilized the whole unbounding sphere to advance several adjacent rays, too.

hobold

« Reply #42 on: August 18, 2015, 11:15:21 AM »

I'll spare you the mathematical derivation and jump straight into code. But a few notes on intended usage first.

1. The scenario is that you start with uniformly distributed samples in the interval [0.0 .. 1.0] and want to concentrate samples around a given point p (also from [0.0 .. 1.0]) with a given sample density.

2. Biasing samples is cheap, but initializing a focused warp is expensive. Do not recompute a warp after each sample, but warp a few dozen samples to amortize this cost.

3. The code here fails when the focus distance is exactly 0.0 or 1.0, but works for all inner points of the interval. That's why there is an initWarp() without parameters; this is the old one that biases samples towards the hit point (the 1.0 end of the unit interval).

    #include <cmath>

    // Helpers used by the slope-aware height2pos(). The original post calls
    // sq() and cb() without showing them; square and cube are assumed here.
    static inline double sq(const double v) { return v*v; }
    static inline double cb(const double v) { return v*v*v; }

    // encapsulates specific warps of density functions, which concentrate
    // sample points near a desired focus point
    class DensityWarp {
    public:
        DensityWarp() {
            initIdentity();
        }

        ~DensityWarp() {}

        // warp sample points according to initialized polynomial
        double warp(const double Value) const {
            return ((a*Value + b)*Value + c)*Value;  // d is implied as 0.0
        }

        // map desired height to required saddle position (internal)
        double height2pos(const double Height) const {
            const double& x = Height;  // abbreviation
            const double subExpr = std::cbrt(-2.0*x*x*x + 3.0*x*x +
                                             std::sqrt(x*x*x*x - 2.0*x*x*x + x*x) - x);
            return std::cbrt(2.0)*(9.0*x - 9.0*x*x)/(9.0*subExpr)
                 - subExpr/std::cbrt(2.0) + x;
        }

        // map desired height and slope to required inflection position (internal)
        double height2pos(const double Height, const double Slope) const {
            const double& x = Height;  // abbreviation
            const double& s = Slope;
            // common subexpression
            const double cx =
                std::cbrt(std::sqrt(4.0*cb(-3.0*s*s + 3.0*s - 9.0*x*x + 9.0*x)
                                    + sq(-54.0*s*s*x + 27.0*s*s - 54.0*x*x*x + 81.0*x*x - 27.0*x))
                          - 54.0*s*s*x + 27.0*s*s - 54.0*x*x*x + 81.0*x*x - 27.0*x);
            return (1.0/(3.0*std::cbrt(2.0)*(-2.0*s - 1.0)))*cx
                 - (std::cbrt(2.0)*(-3.0*s*s + 3.0*s - 9.0*x*x + 9.0*x))/(3.0*(-2.0*s - 1.0)*cx)
                 - (s + x)/(-2.0*s - 1.0);
        }

        void initIdentity() {
            a = 0.0;  // initialize to identity mapping
            b = 0.0;
            c = 1.0;
        }

        // initialize to quadratic focus on far end
        void initWarp() {
            a = 0.0;
            b = -1.0;
            c = 2.0;
        }

        // compute polynomial with desired focus point and "infinite" density
        // (fails for Focus exactly 0.0 or 1.0, see note 3 above)
        void initWarp(const double Focus) {
            const double p = height2pos(Focus);
            const double denominator = 3.0*p*p - 3.0*p + 1.0;
            a = 1.0/denominator;
            b = -3.0*p/denominator;
            c = 3.0*p*p/denominator;
        }

        // compute polynomial with desired density at desired focus point
        // Slope should be 1.0/Density, where a Density of e.g. 5.0 means
        // five times more samples than an unbiased distribution
        void initWarp(const double Focus, const double Slope) {
            const double& s = Slope;
            const double p = height2pos(Focus, s);
            const double denominator = 3.0*p*p - 3.0*p + 1.0;
            a = -(s - 1.0)/denominator;
            b = (3.0*p*s - 3.0*p)/denominator;
            c = -((3.0*p - 1.0)*s - 3.0*p*p)/denominator;
        }

        double a, b, c;  // cubic coefficients a*x^3 + b*x^2 + c*x (d always = 0)
    };
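
As a sanity check on the coefficient formulas in `initWarp(Focus, Slope)`, the small standalone sketch below evaluates them for a directly chosen inflection position p, skipping the `height2pos()` inversion. `Cubic` and `cubicFromInflection` are illustrative names that are not part of the class above. The resulting cubic should pass through (0, 0) and (1, 1), have slope s at p, and have zero curvature there.

```cpp
#include <cmath>

// A cubic a*v^3 + b*v^2 + c*v with its first and second derivative.
struct Cubic {
    double a, b, c;
    double value(double v) const { return ((a*v + b)*v + c)*v; }
    double slope(double v) const { return (3.0*a*v + 2.0*b)*v + c; }
    double curvature(double v) const { return 6.0*a*v + 2.0*b; }
};

// Same coefficient formulas as initWarp(Focus, Slope), but parameterised
// by the inflection position p instead of the focus height.
Cubic cubicFromInflection(const double p, const double s) {
    const double denominator = 3.0*p*p - 3.0*p + 1.0;
    return { -(s - 1.0)/denominator,
             (3.0*p*s - 3.0*p)/denominator,
             -((3.0*p - 1.0)*s - 3.0*p*p)/denominator };
}
```

The value of the cubic at p is the focus height, i.e. where the clustered samples end up in the unit interval. That height is what the caller actually specifies, which is why the class has to invert the relation in `height2pos()`. For use along a ray, one plausible mapping (an assumption, not stated in the post) is Focus = nearest neighbour distance divided by maximum view distance, with each warped sample multiplied by the maximum view distance again.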

hobold

« Reply #43 on: September 01, 2015, 08:44:45 AM »

Thanks to the (approximately) fourfold speedup described above, it took "only" 16 days (on a total of ten processor cores) to render a high quality animation loop in FullHD resolution. It's still not perfect, but worlds better than my earlier attempts which were all so flat and sterile. Enjoy! http://vectorizer.org/rmdltc/tumbleHD002.mp4

Now one can finally see the true amount of detail. While this isn't the holy grail, I find it interesting that there is visibly less whipped cream. Instead of losing fractal detail along both latitude and longitude, the Riemandelettuce is more like flaky pastry, losing detail along only one of the spherical axes.
